Social media manipulation affects senators, report shows

BRUSSELS - The conversation taking place around two U.S. senators’ verified social media accounts remained vulnerable to manipulation through artificially inflated shares and likes from fake users, even amid heightened scrutiny in the run-up to the U.S. presidential election, an investigation by the NATO Strategic Communications Centre of Excellence found.

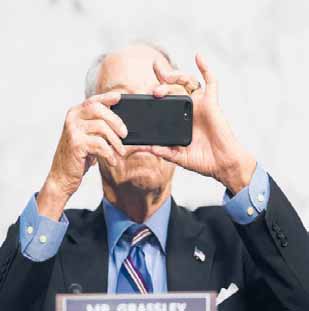

Researchers from the center, a NATO-accredited research group based in Riga, Latvia, paid three Russian companies about $370 to buy 337,768 fake likes, views and shares of posts on Facebook, Instagram, Twitter, YouTube and TikTok, including content from verified accounts of Sens. Chuck Grassley and Chris Murphy.

Grassley’s office confirmed that the Iowa Republican participated in the experiment. Murphy, a Connecticut Democrat, said in a statement that he agreed to participate because it’s important to understand how vulnerable even verified accounts are.

“We’ve seen how easy it is for foreign adversaries to use social media as a tool to manipulate election campaigns and stoke political unrest,” Murphy said.

“It’s clear that social media companies are not doing enough to combat misinformation and paid manipulation on their own platforms and more needs to be done to prevent abuse.”

In an age when much public debate has moved online, widespread social media manipulation not only distorts commercial markets, it is also a threat to national security, NATO StratCom director Janis Sarts said.

“These kinds of inauthentic accounts are being hired to trick the algorithm into thinking this is very popular information and thus make divisive things seem more popular and get them to more people. That in turn deepens divisions and thus weakens us as a society,” he explained.

More than 98% of the fake engagements remained active after four weeks, researchers found, and 97% of the accounts they reported for inauthentic activity were still active five days later.

NATO StratCom did a similar exercise in 2019 with the accounts of European officials. They found that Twitter is now taking down inauthentic content faster and Facebook has made it harder to create fake accounts, pushing manipulators to use real people instead of bots, which is more costly and less scalable.

“We’ve spent years strengthening our detection systems against fake engagement with a focus on stopping the accounts that have the potential to cause the most harm,” a Facebook company spokesperson said in an email.

But YouTube and Facebook-owned Instagram remain vulnerable, researchers said, and TikTok appeared “defenseless.”

“The level of resources they spend matters a lot to how vulnerable they are,” said Sebastian Bay, the lead author of the report. “It means you are unequally protected across social media platforms. It makes the case for regulation stronger. It’s as if you had cars with and without seatbelts.”

Researchers said that for the purposes of this experiment they promoted apolitical content, including pictures of dogs and food, to avoid actual impact during the U.S. election season.

Ben Scott, executive director of Reset.tech, a London-based initiative that works to combat digital threats to democracy, said the investigation showed how easy it is to manipulate political communication and how little platforms have done to fix long-standing problems.

“What’s most galling is the simplicity of manipulation,” he said. “Basic democratic principles of how societies make decisions get corrupted if you have organized manipulation that is this widespread and this easy to do.”

